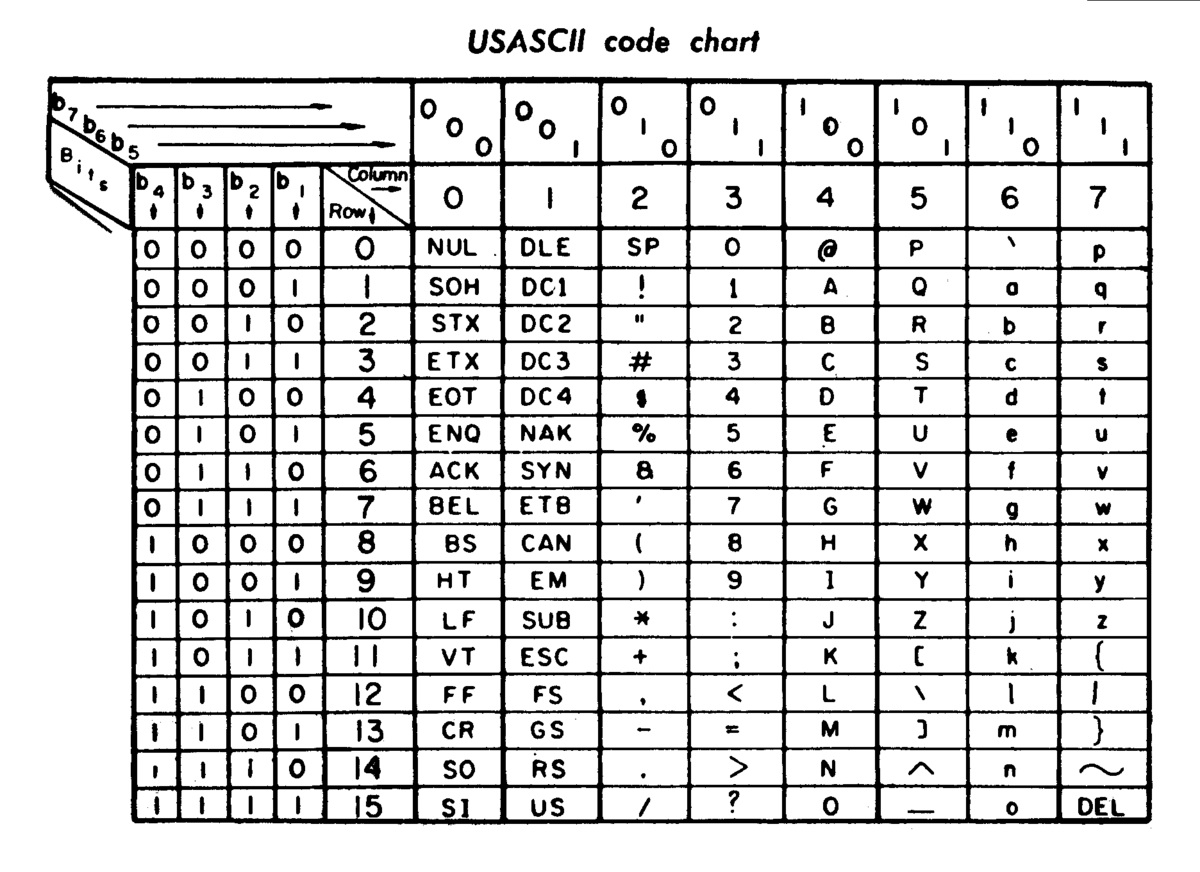

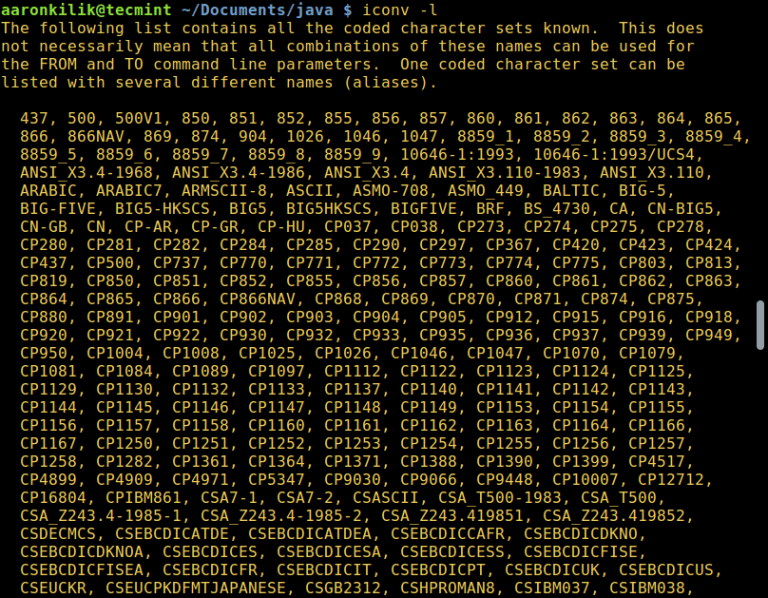

UTF-8 is a transformation format within the Unicode standard. The international standard ISO 10646 defines Unicode in large parts under the name “Universal Coded Character Set” (UCS). The Unicode developers restrict certain parameters for practical use, which is intended to ensure globally uniform, compatible encoding of characters and text elements.

Becker developed the Unicode character set for Xerox between 19… From 1992, the IT industry consortium X/Open was also looking for a system that would replace ASCII and expand the character repertoire. The new encoding nevertheless had to remain compatible with ASCII. The first encoding, UCS-2, did not meet this requirement: it simply translated the character numbers into 16-bit values. UTF-1 also failed, because its encoded values partially collided with existing ASCII character assignments, so a server set to ASCII sometimes output incorrect characters. This was a major problem, since most computers in the English-speaking world were working with ASCII at the time.

The next step was Dave Prosser’s “File System Safe UCS Transformation Format” (FSS-UTF), which eliminated the overlap with ASCII characters. In August of the same year, experts reviewed the draft. At Bell Labs, known for its numerous Nobel Prize winners, Ken Thompson, a co-creator of Unix, and Rob Pike were working on the Plan 9 operating system. They took Prosser’s idea, developed a self-synchronizing encoding (the first byte of each character indicates how many bytes the character occupies), and defined rules for characters that can be represented in more than one way (example: “ä” as a single character or as “a+¨”). They successfully used the encoding in their operating system and introduced it to others. With that, FSS-UTF, now known as “UTF-8”, was essentially complete.
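The properties described above (ASCII compatibility, a length signal in the first byte, self-synchronization, and two spellings of “ä”) can be illustrated with a short Python sketch. The helper names `sequence_length` and `next_boundary` are hypothetical and exist only for this example. The lead-byte check is deliberately simplified and does not reject every invalid byte, and `utf-16-be` merely stands in for UCS-2 here, an assumption that holds only for characters in the Basic Multilingual Plane.

```python
import unicodedata


def sequence_length(lead_byte: int) -> int:
    """Return how many bytes a UTF-8 sequence occupies, judged by its first byte.

    Simplified: invalid lead bytes such as 0xC0 or 0xF5 are not rejected.
    """
    if lead_byte < 0x80:            # 0xxxxxxx: plain ASCII, one byte
        return 1
    if lead_byte >> 5 == 0b110:     # 110xxxxx: two-byte sequence
        return 2
    if lead_byte >> 4 == 0b1110:    # 1110xxxx: three-byte sequence
        return 3
    if lead_byte >> 3 == 0b11110:   # 11110xxx: four-byte sequence
        return 4
    raise ValueError("continuation byte or invalid lead byte")


def next_boundary(data: bytes, pos: int) -> int:
    """Skip continuation bytes (10xxxxxx) until the next character start."""
    while pos < len(data) and data[pos] & 0xC0 == 0x80:
        pos += 1
    return pos


# ASCII compatibility: pure ASCII text is byte-for-byte identical in UTF-8,
# while a fixed 16-bit encoding inserts a zero byte before every ASCII letter.
ascii_text = "Plan 9"
utf8_bytes = ascii_text.encode("utf-8")
ucs2_like = ascii_text.encode("utf-16-be")  # stand-in for UCS-2 (assumption)

# Walk a mixed string using only the length announced by each lead byte.
encoded = "aä€".encode("utf-8")
lengths = []
i = 0
while i < len(encoded):
    n = sequence_length(encoded[i])
    lengths.append(n)
    i += n

# Self-synchronization: starting in the middle of "ä" (index 2 is a
# continuation byte), a reader can find the next character start on its own.
resync_position = next_boundary(encoded, 2)

# The same letter as one precomposed code point or as "a" plus a combining mark.
composed = "\u00e4"     # ä
decomposed = "a\u0308"  # a + combining diaeresis
same_after_nfc = unicodedata.normalize("NFC", decomposed) == composed

print(utf8_bytes, ucs2_like)
print(lengths, resync_position, same_after_nfc)
```

Because continuation bytes always start with the bits `10`, a decoder that lands in the middle of a character can discard them and resume at the next lead byte, which is what makes the format self-synchronizing.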
